Assignment 2: Exploratory Data Analysis: Applying Visualization Tools

Due Friday, October 14, 5pm

Description

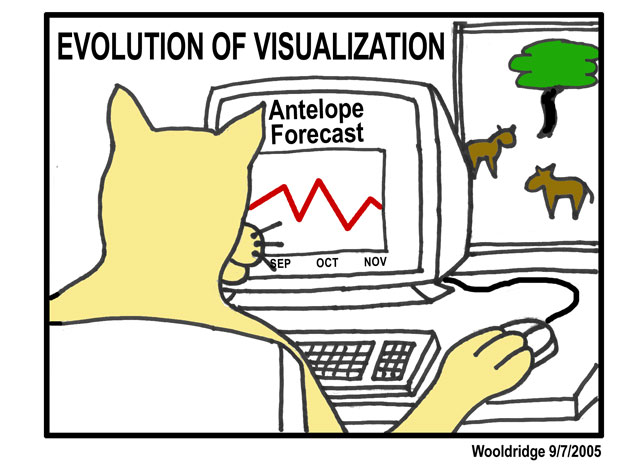

In this assignment you will use commercial, off-the-shelf visualization tools to perform exploratory data analysis on a real-world data set. The goal is to gain practice generating and investigating hypotheses through visual analysis and to learn and critique leading visualization tools.

You may work in pairs for this assignment. You'll be turning it in online at a link to be made available later.

Assignment

This assignment asks you to use visualization to analyze a data set. The data we will be using are campaign finance reports collected by the U.S. Federal Election Commission, summarizing campaign spending and contributions for each Congressional election from 1993 to 2002. The data set is described in greater detail below.

For this assignment, you should:

- Think about what kinds of relationships you expect to find in the data. Write down at least three hypotheses about what you will find in the dataset.

- See if you can find evidence to verify or refute any of these hypotheses. The idea is that these tools should help formulate hypotheses that would then be analyzed with rigorous statistical tests (but we won't be doing this part in this class). Use the analysis tools to look for, e.g.,

- relationships between pairs of variables (correlations, clusters)

- outliers of various kinds

- trends

- Use the tools to explore the dataset and look for other, unexpected kinds of relations. Note which features of the visualized data attracted your attention. If the tool supports it, try to highlight or otherwise isolate the subset of the data containing an interesting feature.

- In past years we've sometimes seen that some datasets are more amenable to analysis if some of the data is transformed (e.g., by computing averages or medians, or by converting numbers to percentages). If you feel you need to do this, go ahead (Tableau supports this), but if it isn't needed then leave the data as is.

- Write up a 3-5 page summary (not counting screen shots) of what you found, both expected and unexpected. This can include relationships that did not appear even though you thought they might. Try to report on at least one surprising piece of information. You should include some screen shots to help convey your discoveries (transitioning from analysis to communication!) but please scale them so they aren't too large.

- In your summary, comment on the tools you tried. What features did you find useful? Which ones were intuitive to use, and which were hard to understand? Was there any functionality that the tool did not have that you wished it did? In other words, how would you improve on the tool?

- To get a better idea how to do this assignment, you may find it helpful to look at student writeups from the previous course.
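
If you are unsure what the transformations mentioned above (averages, medians, percentages) do to a column, the short Python sketch below works through them on made-up numbers. The values and the helper name `as_percentages` are assumptions for illustration only; they are not taken from the data set, and you are not expected to write code for this assignment.

```python
from statistics import mean, median

# Hypothetical receipts (in dollars) for four candidates; not real FEC values.
receipts = [120000.0, 80000.0, 1500000.0, 300000.0]


def as_percentages(values):
    """Convert raw amounts to each value's percentage share of the total."""
    total = sum(values)
    if total == 0:
        # Avoid dividing by zero when every amount is zero.
        return [0.0 for _ in values]
    return [100.0 * value / total for value in values]


average_receipts = mean(receipts)
median_receipts = median(receipts)
shares = as_percentages(receipts)

print("average:", average_receipts)
print("median:", median_receipts)
print("percent of total:", shares)
```

Note how a single large value pulls the average well above the median, which is one reason a transformed view can make skewed data easier to read.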

Data

The data set being analyzed is a financial summary of U.S. campaign finance contributions and spending for each 2-year Congressional election cycle between 1993 and 2002. These data sets are published by the United States Federal Election Commission, and include summary information for every candidate for Congressional office (both the Senate and House of Representatives). The data was downloaded from: http://www.fec.gov/finance/disclosure/ftpsum.shtml

The data can be downloaded here:

The data is represented as a CSV (comma-separated values) text file. In addition to being readable by the visualization tools we will be using, it can also be directly imported into Microsoft Excel. You are encouraged to open the data up and take a look at it, either in Excel or in a text editor, to get a feel for its structure and scale before loading it into one of the visualization tools.

Each row of data represents a single candidate running for office in the given year (years are listed using the last year of the election cycle), and contains information about contributions, expenditures, party affiliation, state, congressional district (or none for the Senate), and outcome (win/loss/runoff). The data set is fairly large, containing over 9000 rows. The only difference between the data set being given to you and the ones on the FEC website is that we have concatenated data for multiple election cycles and added a year column, enabling any number of trend analyses. As you'll undoubtedly notice, the data is highly multidimensional, with a large number of columns. The FEC website includes a detailed description of each column.
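
As an alternative to Excel or a text editor, a few lines of Python can give a quick feel for the file's structure and scale. The sketch below is only an illustration: the sample text and every column name in it (`year`, `state`, `district`, `party`, `receipts`, `disbursements`, `outcome`) are hypothetical stand-ins, so check the actual header row and the FEC column descriptions before relying on any of them.

```python
import csv
import io
from collections import Counter

# Tiny stand-in for the real file; every column name and value is hypothetical.
SAMPLE_CSV = """year,state,district,party,receipts,disbursements,outcome
1996,CA,12,DEM,500000,450000,W
1996,CA,12,REP,200000,210000,L
1998,TX,,REP,3000000,2900000,W
"""


def summarize(csv_file):
    """Return (column names, row count, rows per year) for an open CSV file."""
    reader = csv.DictReader(csv_file)
    rows_per_year = Counter()
    row_count = 0
    for row in reader:
        row_count += 1
        rows_per_year[row["year"]] += 1
    return reader.fieldnames, row_count, dict(rows_per_year)


columns, row_count, rows_per_year = summarize(io.StringIO(SAMPLE_CSV))
print(columns)
print(row_count)
print(rows_per_year)
```

To run the same summary on the downloaded file, pass `summarize` a file object opened with `open(path, newline="")`, where `path` is wherever you saved the CSV.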

Given the large amount of data, a large number of hypotheses could be generated and tested. One might want to investigate spending and contributions at an aggregated level, breaking down the data by political parties at the national level. Alternatively, it is equally valid to filter out many of the attributes or entire sections of the data and explore, say, finances at a finer granularity, for example by investigating one's own state and local and neighboring congressional districts.
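
The two approaches described above, aggregating nationally by party versus filtering down to a single state, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the records, the field names, and the helper functions `total_by_party_and_year` and `filter_by_state` are all hypothetical, and the visualization tools provide equivalent grouping and filtering interactively.

```python
from collections import defaultdict

# Hypothetical records; field names and amounts are illustrative, not FEC data.
CANDIDATES = [
    {"year": 1996, "state": "CA", "party": "DEM", "disbursements": 450000.0},
    {"year": 1996, "state": "CA", "party": "REP", "disbursements": 210000.0},
    {"year": 1996, "state": "TX", "party": "REP", "disbursements": 2900000.0},
    {"year": 1998, "state": "CA", "party": "DEM", "disbursements": 600000.0},
]


def total_by_party_and_year(rows):
    """Sum disbursements for each (year, party) pair."""
    totals = defaultdict(float)
    for row in rows:
        totals[(row["year"], row["party"])] += row["disbursements"]
    return dict(totals)


def filter_by_state(rows, state):
    """Keep only the rows for one state."""
    return [row for row in rows if row["state"] == state]


# Coarse view: spending by party across all states.
national = total_by_party_and_year(CANDIDATES)

# Finer view: the same breakdown restricted to a single state.
california = total_by_party_and_year(filter_by_state(CANDIDATES, "CA"))

print(national)
print(california)
```

Comparing the two results shows how the same hypothesis can look different at different granularities, which is worth keeping in mind when choosing what to visualize.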

Tools

We will be using three visualization software packages: Spotfire, Tableau, and Eureka/TableLens. All three are products of companies spun off directly from information visualization research efforts: Spotfire from the Human-Computer Interaction Laboratory at the University of Maryland, Tableau from Stanford University, and TableLens from Xerox PARC. A brief tutorial for these programs will be given in class.

We have licenses that allow you to download the Tableau and Spotfire software onto your own machines, and we'll send you email about how to activate these. In addition, an old version of Spotfire can be found on the lab machines. Eureka is currently available only on the lab machines.

You are required to use at least two of the three programs in this assignment (i.e., even if you prefer one package, you should use a second one to explore at least one hypothesis). Based on your experiences, please include some comparison of the tools you used, including relative strengths and weaknesses, in your critique.